TL;DR:

- Many AI projects stall at pilot stage due to poor data quality and integration challenges.

- AI is most effective in well-defined, high-volume, low-risk use cases like anomaly detection.

- Success depends on data readiness, governance, and organizational discipline before scaling AI.

AI promises to transform IT operations, yet the gap between expectation and delivery is real. Between 87% and 89% of enterprises report that AIOps ROI met or exceeded expectations, but 62% of AI projects stall at the pilot stage because of data quality issues. For IT managers and decision-makers in UK enterprises, this tension is the central challenge right now. This guide cuts through the noise. You will get a clear look at why AI matters in IT operations today, what it actually solves and where it falls short, the real barriers standing between pilot programs and full-scale deployment, and a practical roadmap to move your organization forward with confidence.

Table of Contents

- Why AI matters in IT operations today

- What AI really does (and doesn't) solve in the IT stack

- Barriers to scaling AI in IT: What's stalling UK enterprises?

- From pilot to scale: Practical roadmap for UK IT leaders

- Why the most successful IT-AI journeys start with basics others neglect

- Take your IT operations to the next level with trusted AI expertise

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Start with proven use cases | Focus on scalable applications like anomaly detection before pursuing advanced automation. |
| Data quality is critical | Poor data causes most AI pilots in IT to stall; prioritize governance from day one. |
| Barriers are surmountable | With practical strategy and stakeholder alignment, UK enterprises can move AI from pilot to production. |
| AI amplifies human expertise | Success depends on blending automation with skilled IT teams and effective communication. |

Why AI matters in IT operations today

The pressure on IT teams has never been greater. Systems are more complex, threats evolve faster, and business leaders expect IT to be a driver of growth rather than a cost center. AI offers a genuine path from reactive support to proactive innovation, and that shift is exactly what UK enterprises are chasing.

The top motivators for adopting AI in IT operations consistently include:

- Reducing unplanned downtime through predictive monitoring and early fault detection

- Strengthening cybersecurity posture by identifying anomalies and responding at machine speed

- Optimizing resource allocation by forecasting demand and automating routine tasks

- Accelerating incident resolution so your team spends less time firefighting and more time building

These are not abstract ambitions. UK enterprises show high ROI in AIOps when solutions are deployed correctly, which explains why budget allocation for AI in IT is accelerating across sectors from financial services to manufacturing.

"The organizations seeing the strongest returns are those that align AI adoption with specific, measurable operational outcomes rather than broad transformation goals."

Yet the numbers also tell a more complicated story. Strong ROI figures coexist with widespread pilot paralysis. Many IT leaders invest in AI tooling, run a successful proof of concept, and then find that scaling the initiative hits walls they did not anticipate. Understanding the technology trends shaping transformation in this space is important, but it is equally critical to understand why so many promising projects stall before they create real business value. The fundamental case for AI in IT is strong. The execution, however, requires a different kind of discipline, one built on data readiness, governance, and realistic expectations. Recognizing where digital transformation efficiency actually comes from is the first step toward getting it right.

What AI really does (and doesn't) solve in the IT stack

There is a lot of optimism about what AI can do inside a modern IT environment. Some of it is justified. Some of it is not. Being clear-eyed about the difference saves you from expensive missteps.

Only a narrow set of operational use cases is scaling reliably in UK enterprises, largely because of integration and skills challenges. Here is a direct comparison of where AI delivers and where expectations tend to outrun reality:

| Use case | AI performance today | Maturity level |
|---|---|---|
| Anomaly detection in system logs | High reliability, strong ROI | Production-ready |
| Predictive maintenance for hardware | Good results with clean data | Production-ready |
| Automated incident triage | Reliable for known issue patterns | Production-ready |
| Self-healing infrastructure | Partial, narrow scenarios only | Early-stage |
| Autonomous network management | Limited, high governance risk | Experimental |
| Full agentic IT operations | Not yet reliable at scale | Aspirational |

The pattern here is important. AI performs well when the problem is well-defined, the data is clean, and the volume is high. It struggles when processes are poorly documented, data is siloed, or governance structures are absent.
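To make "siloed data" concrete: before any model can learn from operational events, records from different tools have to agree on field names, time zones and severity scales. The following is a minimal sketch, assuming two hypothetical monitoring tools (`tool_a` and `tool_b`) whose payload fields and numeric severity codes are invented for this example. It maps both onto one shared schema so that the same incident looks the same regardless of which tool reported it.

```python
from __future__ import annotations

from datetime import datetime, timezone
from typing import Any, Callable

# Hypothetical raw formats: field names and severity codes are invented for illustration.
SEVERITY_CODES_B = {1: "CRITICAL", 2: "ERROR", 3: "WARNING"}


def from_tool_a(raw: dict[str, Any]) -> dict[str, Any]:
    """Tool A (hypothetical) sends Unix epoch seconds and text severity levels."""
    return {
        "timestamp": datetime.fromtimestamp(raw["epoch"], tz=timezone.utc),
        "host": raw["hostname"].lower(),
        "severity": raw["level"].upper(),
        "message": raw["msg"],
        "source": "tool_a",
    }


def from_tool_b(raw: dict[str, Any]) -> dict[str, Any]:
    """Tool B (hypothetical) sends ISO 8601 timestamps with an offset and numeric severities."""
    return {
        # Convert to UTC so events from both tools line up on one timeline.
        "timestamp": datetime.fromisoformat(raw["time"]).astimezone(timezone.utc),
        "host": raw["node"].lower(),
        "severity": SEVERITY_CODES_B.get(raw["sev"], "INFO"),
        "message": raw["text"],
        "source": "tool_b",
    }


NORMALIZERS: dict[str, Callable[[dict[str, Any]], dict[str, Any]]] = {
    "tool_a": from_tool_a,
    "tool_b": from_tool_b,
}


def normalize(source: str, raw: dict[str, Any]) -> dict[str, Any]:
    """Map a raw event from a known source onto the shared schema."""
    return NORMALIZERS[source](raw)


# The same disk alert, reported by both tools in their own formats.
events = [
    normalize("tool_a", {"epoch": 1714552200, "hostname": "DB-01", "level": "error", "msg": "disk full"}),
    normalize("tool_b", {"time": "2024-05-01T09:30:00+01:00", "node": "db-01", "sev": 2, "text": "disk full"}),
]
```

Keeping one small function per source means a legacy system can be added later without touching the rest of the pipeline; the design choice matters more than the illustrative field names.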

Human expertise remains essential in several areas:

- Interpreting AI-surfaced insights and deciding on action

- Managing exceptions that fall outside trained model parameters

- Governing AI behavior to prevent automated errors compounding at scale
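One way to put that oversight into practice is a gate that decides whether an AI-recommended action may run automatically or must go to an engineer first. This is a hypothetical sketch: the thresholds (`MIN_CONFIDENCE`, `MAX_AUTO_HOSTS`) and the `ProposedAction` fields are illustrative assumptions, not settings from any particular AIOps product.

```python
from dataclasses import dataclass

# Hypothetical governance thresholds, owned by whoever is accountable for the AI deployment.
MIN_CONFIDENCE = 0.9
MAX_AUTO_HOSTS = 5


@dataclass
class ProposedAction:
    description: str
    confidence: float    # model's confidence in its recommendation, 0 to 1
    hosts_affected: int  # blast radius if the action turns out to be wrong
    known_pattern: bool  # whether the situation matches what the model was trained on


def decide(action: ProposedAction) -> str:
    """Return "auto" only for confident, in-pattern actions with a small blast radius."""
    if not action.known_pattern:
        return "human_review"  # exception outside the model's trained parameters
    if action.confidence < MIN_CONFIDENCE:
        return "human_review"  # insight needs human interpretation before acting
    if action.hosts_affected > MAX_AUTO_HOSTS:
        return "human_review"  # stop one automated error spreading across the estate
    return "auto"


print(decide(ProposedAction("restart web service", 0.95, 2, True)))
print(decide(ProposedAction("fleet-wide config rollback", 0.97, 40, True)))
```

The blast-radius check is what keeps a single wrong recommendation from compounding at scale: even a highly confident model is not trusted to touch many systems at once without a human in the loop.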

Reviewing proven infrastructure practices will show you how foundational architecture decisions directly affect what AI can accomplish in your environment. Your IT infrastructure options also determine which AI tools will integrate cleanly and which will create new friction.

Pro Tip: Start your AI journey with high-volume, low-risk use cases such as log analysis or alert correlation. These deliver measurable value quickly and build the internal confidence and data discipline needed for more ambitious projects later.
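For log analysis, a useful first step does not even require machine learning. A minimal sketch, assuming per-minute error counts have already been extracted from your logs, is a rolling z-score: flag any minute whose count sits more than a set number of standard deviations from the recent average. The `window` and `threshold` defaults are assumptions to tune, and commercial AIOps tools typically use more sophisticated models; this simply shows the shape of the problem.

```python
from __future__ import annotations

from collections import deque
from statistics import mean, pstdev


def detect_anomalies(counts: list[int], window: int = 10, threshold: float = 3.0) -> list[int]:
    """Return the indices of values more than `threshold` standard deviations
    away from the mean of the preceding `window` values."""
    history: deque[int] = deque(maxlen=window)
    anomalies: list[int] = []
    for i, value in enumerate(counts):
        if len(history) == window:  # only score once a full baseline exists
            mu = mean(history)
            sigma = pstdev(history)
            if sigma == 0:
                # Perfectly flat baseline: any change at all counts as a deviation.
                if value != mu:
                    anomalies.append(i)
            elif abs(value - mu) / sigma > threshold:
                anomalies.append(i)
        history.append(value)  # the window slides forward; the oldest value drops out
    return anomalies


# Error counts per minute; minute 10 contains a burst of failures.
error_counts = [4, 5, 3, 6, 5, 4, 5, 6, 4, 5, 48, 5, 4]
print(detect_anomalies(error_counts))  # → [10]
```

In this simple version a flagged spike stays in the window and widens the baseline for the next few minutes; a production version would likely exclude flagged points from `history`.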

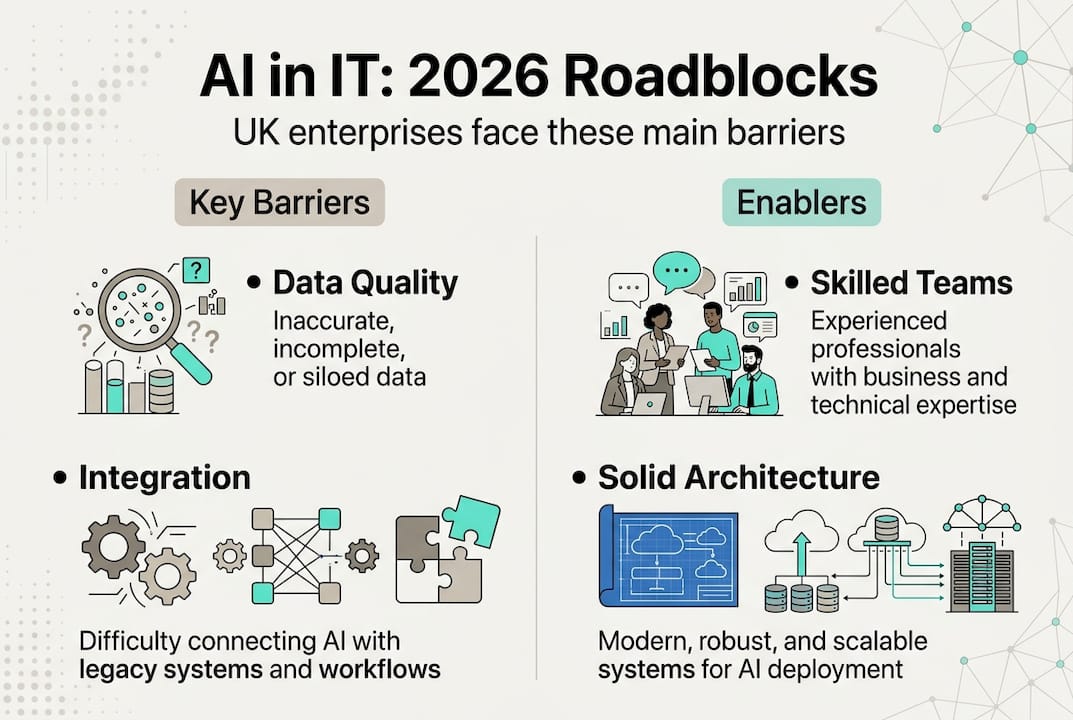

Barriers to scaling AI in IT: What's stalling UK enterprises?

The path from a successful pilot to a scaled AI deployment is where most organizations lose momentum. Understanding exactly why helps you avoid the same traps.

62% of AI projects remain stuck in pilots largely due to data quality issues. Poor data is not just an inconvenience. It corrupts model outputs, erodes trust in AI recommendations, and eventually causes teams to abandon initiatives entirely.

The UK-specific challenges run deeper than data alone. Integration difficulties affect 59% of UK enterprises, and skills gaps mean that even organizations with the budget to deploy AI often lack the internal capability to run and evolve it.

The main blockers, ranked by impact, include:

| Barrier | Impact level | Common symptom |
|---|---|---|
| Poor data quality and governance | Critical | Model outputs are unreliable or contradictory |
| Legacy infrastructure integration | High | AI tools cannot access operational data cleanly |
| Shortage of AI and ML skills | High | Pilots succeed but cannot be maintained or improved |
| Cultural resistance | Medium | Teams distrust or ignore AI recommendations |
| Unclear ownership and governance | Medium | No one accountable for AI model performance |

"Data is the foundation. You cannot build reliable AI capabilities on fragmented, inconsistent operational data, regardless of how sophisticated your tooling is."

Here are three practical steps to address the top blockers:

- Audit your data estate first. Before selecting an AI tool, map your data sources, assess quality, and identify gaps. This work is unglamorous but decisive.

- Build an integration layer. Invest in middleware or APIs that allow AI tools to access data from legacy systems without requiring a full infrastructure overhaul.

- Create an AI governance function. Assign clear ownership of AI performance, model drift, and output quality. Without accountability, AI deployments degrade over time.
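The data audit can start small. As a minimal sketch, assuming events have already been collected into dictionaries tagged with a `source`, the following measures how often each required field is missing for each source. The `REQUIRED_FIELDS` schema, the source names and the 5% tolerance are hypothetical; substitute whatever your own governance standards define.

```python
from __future__ import annotations

from collections import defaultdict
from typing import Any

# Hypothetical minimum schema every operational event should carry.
REQUIRED_FIELDS = ("timestamp", "host", "severity", "message")


def audit(events: list[dict[str, Any]]) -> dict[str, dict[str, float]]:
    """For each source, return the fraction of events missing each required field."""
    totals: dict[str, int] = defaultdict(int)
    missing: dict[str, dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for event in events:
        source = event.get("source", "unknown")
        totals[source] += 1
        for field in REQUIRED_FIELDS:
            if event.get(field) in (None, ""):  # absent, null or empty counts as missing
                missing[source][field] += 1
    return {
        source: {field: missing[source][field] / count for field in REQUIRED_FIELDS}
        for source, count in totals.items()
    }


def sources_needing_work(report: dict[str, dict[str, float]], max_missing: float = 0.05) -> list[str]:
    """List sources where any required field is missing more often than the tolerance."""
    return [s for s, rates in report.items() if any(r > max_missing for r in rates.values())]


sample_events = [
    {"source": "legacy_syslog", "timestamp": "2024-05-01T08:30:00Z", "host": "", "severity": "ERROR", "message": "disk full"},
    {"source": "legacy_syslog", "timestamp": None, "host": "db-01", "severity": "ERROR", "message": "disk full"},
    {"source": "cloud_monitor", "timestamp": "2024-05-01T08:31:00Z", "host": "web-01", "severity": "WARNING", "message": "high latency"},
]
report = audit(sample_events)
print(sources_needing_work(report))  # → ['legacy_syslog']
```

A per-source report like this turns "our data is messy" into a specific, prioritized list of feeds to fix before any AI tool is selected.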

Learning how to manage IT transformation effectively and applying proven IT management strategies will significantly improve your odds of breaking through these barriers.

From pilot to scale: Practical roadmap for UK IT leaders

Moving AI from experiment to production requires more than technical skill. It requires organizational discipline and a clear sequence of decisions.

Prioritize data quality and start with high-volume, low-risk use cases such as anomaly detection. This principle should anchor your entire roadmap.

Here is a focused action plan:

- Assess your operational baseline. Measure current incident volume, mean time to resolution, and false positive rates. You need a baseline to prove AI impact later.

- Govern your data. Establish data ownership, quality standards, and access controls before deploying any AI model. This step alone prevents most pilot failures.

- Select a focused prototype use case. Pick one high-volume, well-understood problem. Alert fatigue reduction or log anomaly detection are proven starting points.

- Run the prototype with defined success criteria. Set measurable targets upfront. If the prototype does not hit them, learn from that before expanding.

- Scale incrementally. Expand to adjacent use cases using the governance structures and data discipline built in earlier phases.

- Measure and communicate results. Translate technical outcomes into business language. Downtime reduced, costs avoided, and engineer hours freed are metrics your stakeholders care about.
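The baseline and success-criteria steps come down to simple arithmetic on incident records. This is a hypothetical sketch: the `Incident` structure, the `baseline` and `meets_targets` helpers, the choice to compute mean time to resolution over genuine incidents only, and the 20% improvement targets are all assumptions for illustration, not recommended values.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Incident:
    opened: datetime
    resolved: datetime
    false_positive: bool  # the alert turned out not to need any action


def baseline(incidents: list[Incident]) -> dict[str, float]:
    """Incident volume, mean time to resolution in hours, and false positive rate."""
    real = [i for i in incidents if not i.false_positive]  # assumption: MTTR excludes false positives
    mttr_hours = (
        sum((i.resolved - i.opened for i in real), timedelta()) / len(real) / timedelta(hours=1)
        if real else 0.0
    )
    fp_rate = sum(i.false_positive for i in incidents) / len(incidents) if incidents else 0.0
    return {"incident_volume": float(len(incidents)), "mttr_hours": mttr_hours, "false_positive_rate": fp_rate}


def meets_targets(before: dict[str, float], after: dict[str, float],
                  min_mttr_cut: float = 0.2, min_fp_cut: float = 0.2) -> bool:
    """Hypothetical success criteria: MTTR and false positive rate must both fall
    by at least the given fractions, agreed before the prototype starts."""
    def cut(metric: str) -> float:
        return (before[metric] - after[metric]) / before[metric] if before[metric] else 0.0
    return cut("mttr_hours") >= min_mttr_cut and cut("false_positive_rate") >= min_fp_cut


t0 = datetime(2024, 1, 1, 9, 0)
incidents = [
    Incident(t0, t0 + timedelta(hours=2), False),
    Incident(t0, t0 + timedelta(hours=4), False),
    Incident(t0, t0 + timedelta(minutes=5), True),
    Incident(t0, t0 + timedelta(minutes=5), True),
]
print(baseline(incidents))  # volume 4, MTTR 3.0 hours, false positive rate 0.5
```

Running `baseline` over comparable periods before and during the prototype produces the before-and-after figures that stakeholders will ask for.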

Optimizing IT workflows gives your AI tools cleaner processes to work with, multiplying their impact. Studying UK transformation examples from peer organizations shows what scaled success actually looks like in practice. Strong IT monitoring best practices are also foundational, because AI-powered monitoring only improves on a solid monitoring baseline.

Pro Tip: Designate a cross-functional AI steering group that includes IT, finance, and a business unit lead. Regular updates to this group build the organizational trust that turns a one-time pilot into a sustained program.

The most common mistake at this stage is rushing toward more advanced automation before the foundational use cases are proven and stable. Patience here is a competitive advantage.

Why the most successful IT-AI journeys start with basics others neglect

Here is the uncomfortable truth most vendors will not tell you: the enterprises achieving the strongest, most durable AI outcomes are not the ones that deployed the flashiest technology. They are the ones that spent six months cleaning their data, documenting their processes, and building governance structures before writing a single line of AI configuration.

Consider a mid-sized UK financial services firm that invested heavily in an agentic AI platform, only to find that the system's recommendations were based on incomplete log data from three siloed legacy systems. The project was paused after 18 months. A different organization in the same sector focused first on standardizing event data across all monitoring tools, then deployed a straightforward anomaly detection model. Within 12 months, they reduced false positive alerts by 40% and freed up significant engineer capacity.

The lesson is not that ambition is wrong. It is that high-volume, low-risk use cases create the proof points and organizational muscle memory that make ambitious projects viable later. Tracking the right business technology trends matters, but the fundamentals are what actually drive results. The unglamorous work is where competitive advantage is built.

Take your IT operations to the next level with trusted AI expertise

Putting AI to work in IT operations is one of the highest-leverage moves available to UK enterprises right now, but getting it right requires more than good intentions and the right software licenses.

At MightySkyTech, we work with IT leaders across medium and large UK enterprises to design, govern, and scale AI-driven IT operations that deliver measurable outcomes. From data readiness assessments and infrastructure alignment to full AIOps deployment and ongoing managed support, we bring both the strategy and the hands-on capability your team needs. If you are ready to move beyond pilot projects and build AI operations that actually stick, explore AI IT solutions from MightySkyTech and let's talk about what is possible for your organization.

Frequently asked questions

What is the most valuable AI use case in IT operations?

Anomaly detection in system monitoring is the most scalable and reliable starting point, delivering clear ROI with manageable risk. Experts consistently recommend starting with anomaly detection before expanding to more complex automation.

Why do so many AI projects in IT fail to scale?

Poor data quality, integration challenges, and skills gaps are the primary culprits. 62% of AI projects stall in pilot, and integration difficulties affect 59% of UK enterprises attempting to scale.

How long does it take to scale AI in IT operations?

Transitioning from pilot to scaled implementation typically requires 12 to 24 months, depending on data readiness, infrastructure maturity, and organizational buy-in from both IT and business leadership.

Are advanced, agentic AI solutions reliable today?

Not for most UK enterprises. Only narrow use cases scale reliably in production environments today, while advanced agentic AI typically faces significant integration and governance barriers that limit real-world reliability.